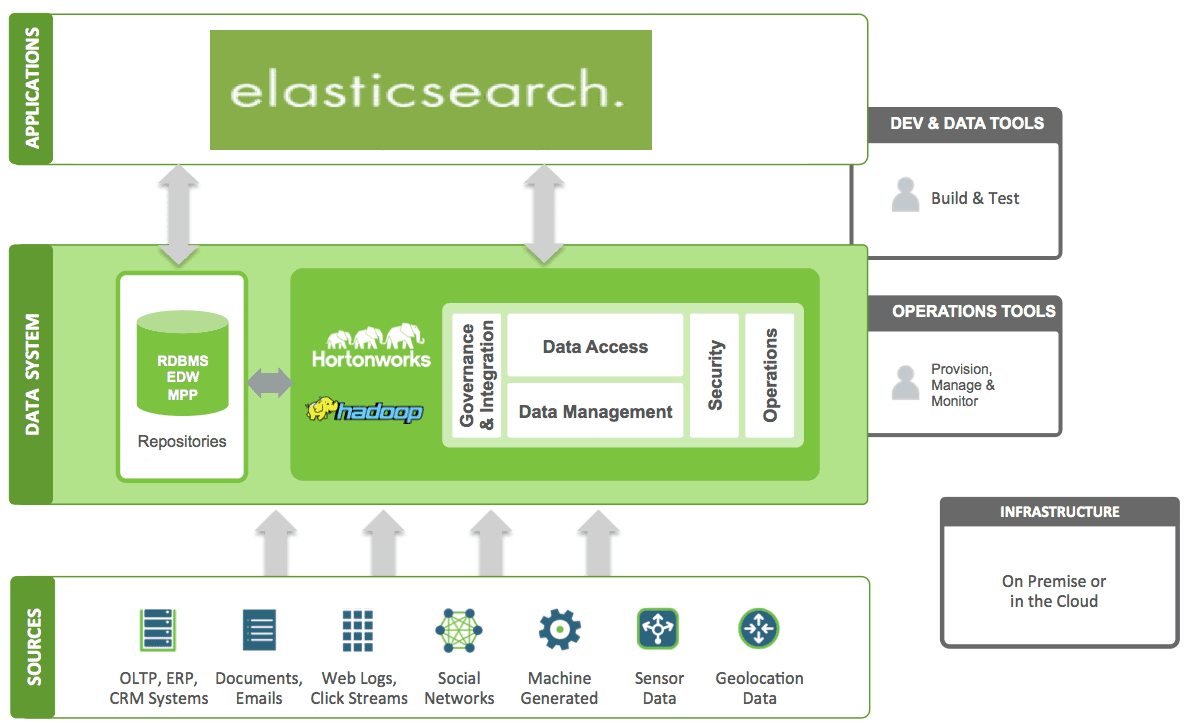

Right now, when I run this query, it returns the name, lastname, address and gender for dave, and I want to put the results into a CSV on my desktop when I run the query. This is my first time using Elasticsearch and cURL, so I am confused about how to do this.

To move data from an existing table to S3, one can run: `ALTER TABLE oldtable ENGINE=S3 COMPRESSION_ALGORITHM=zlib`. To get data back to a normal table, one can do: `ALTER TABLE s3table ENGINE=INNODB`.

In this tech talk, learn how to use cold storage to retain any amount of data while reducing cost per GB to near Amazon S3 storage prices. One DataSync scenario is synchronizing data that was written by a utility other than DataSync, where the storage class is S3 Standard, S3 Intelligent-Tiering (Frequent Access or Infrequent Access tiers), S3 Standard-IA, or S3 One Zone-IA.

Replace the following query with your own Elasticsearch query. For the export, first we need to create an S3 bucket: create the bucket by using the AWS S3 service and create a directory like logs, as shown in the figure below. You can view log data, export it to S3, or process it in real time. AWS Glue uses PySpark to include Python files in AWS Glue ETL jobs.

While Elasticsearch can meet a lot of analytics needs, it is best complemented with other analytics backends like Hadoop and MPP databases. As a 'staging area' for such complementary backends, AWS's S3 is a great fit. Amazon OpenSearch Service domains run Elasticsearch (versions 1.5 to 7.10) or OpenSearch.
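As a rough illustration of using a bucket with a logs directory as a staging area, here is a minimal sketch. It assumes you already have a boto3 S3 client (for example from `boto3.client("s3")`) and an existing bucket; the function name `stage_file`, the default `logs` prefix, and the bucket name in the usage note are hypothetical. Note that an S3 "directory" is only a key prefix, so nothing has to be created beyond the bucket itself.

```python
import posixpath
from pathlib import Path


def stage_file(s3_client, local_path, bucket, prefix="logs"):
    """Upload one local file under `prefix/` in `bucket` and return its key.

    `s3_client` is assumed to be a boto3 S3 client; it is passed in rather
    than created here so the function has no hard dependency on credentials.
    """
    # S3 keys always use forward slashes, regardless of the local OS.
    key = posixpath.join(prefix, Path(local_path).name)
    # upload_file(Filename, Bucket, Key) is the boto3 S3 client call.
    s3_client.upload_file(str(local_path), bucket, key)
    return key
```

With real credentials configured, a call might look like `stage_file(boto3.client("s3"), "results.csv", "my-example-bucket")`, which would store the file under the key `logs/results.csv`.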

I am trying to export the results found by the query below into a CSV on my desktop. Elasticsearch is an open source, distributed, real-time search backend.
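One possible way to get from a search response to a CSV file is sketched below, using only the Python standard library. It assumes the response has already been parsed from JSON into a dict (for example from the output of a cURL call to the `_search` endpoint) and relies on the standard response layout, where each match sits under `hits.hits[]._source`. The function name `hits_to_csv`, the `FIELDS` list, and the values in `SAMPLE_RESPONSE` are made up for illustration; only the field names come from the question.

```python
import csv

# Columns to export; these match the fields the query is said to return.
FIELDS = ["name", "lastname", "address", "gender"]

# Illustrative response with invented values, shaped like a _search result.
SAMPLE_RESPONSE = {
    "hits": {
        "hits": [
            {
                "_source": {
                    "name": "dave",
                    "lastname": "example",
                    "address": "1 Example Street",
                    "gender": "male",
                }
            }
        ]
    }
}


def hits_to_csv(response, fieldnames, csv_path):
    """Write the _source of every hit to `csv_path`; return the row count."""
    hits = response.get("hits", {}).get("hits", [])
    # newline="" lets the csv module control line endings itself.
    with open(csv_path, "w", newline="", encoding="utf-8") as f:
        # extrasaction="ignore" skips fields that are not in `fieldnames`;
        # fields missing from a document are written as empty strings.
        writer = csv.DictWriter(f, fieldnames=fieldnames, extrasaction="ignore")
        writer.writeheader()
        for hit in hits:
            writer.writerow(hit.get("_source", {}))
    return len(hits)
```

To write to the desktop, the path could be built with `pathlib`, for example `hits_to_csv(response, FIELDS, Path.home() / "Desktop" / "results.csv")`, assuming a `Desktop` folder exists in your home directory.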
